In recent years, large language models (LLMs) have revolutionized the field of natural language processing (NLP). These powerful AI models can generate human-like text, answer questions, and perform various language-related tasks with remarkable accuracy. Techniques for writing prompts are crucial for effective LLM interaction. They guide the model’s output, enabling more relevant and coherent responses.

Well-crafted AI prompts elicit the desired information, maintain context, and steer the conversation. They help users harness the full potential of LLMs, leading to better performance and greater user satisfaction.

What is prompt writing?

The technique of writing prompts is the process of crafting input text or instructions that are fed into a language model to guide its output and behaviour. It involves carefully selecting words, phrases, and structures to provide clear, concise, and context-rich instructions to the model. For additional guidance and examples, resources like https://paperwriter.com/ can be helpful in understanding how to refine prompts effectively. The goal is to elicit relevant, coherent, and high-quality responses from the language model based on the given prompt.

Writing prompts is crucial for harnessing the full potential of large language models (LLMs). Well-crafted prompts help to guide and constrain the model’s output, ensuring that it stays on topic and generates responses that are relevant to the user’s intent. By providing clear instructions and context within the prompt, users can steer the model’s behaviour, obtain more accurate and targeted responses, and mitigate issues such as hallucinations or irrelevant outputs. Effective techniques for writing prompts allow users to leverage the vast knowledge and capabilities of LLMs for a wide range of applications, such as question-answering, content generation, analysis, problem-solving, and more.

In the next segment, we will explore the five essential techniques for writing prompts used in LLMs:

- Role Prompting

- Few-Shot Prompting

- Chain of Thought Prompting

- Self-Consistency

- Generated Knowledge Prompting

Techniques for Writing Prompts

There are many techniques you can use for writing prompts, but not all of them are equally useful. To save you the trouble of testing each one, here is a list of techniques that are widely used and have been shown to be both effective and efficient.

1. Role Prompting

Role prompting is a technique for writing prompts that assigns a specific role to the AI model. The prompt starts with a role instruction such as “You are a prompt engineer” or “You are a physics professor,” and then poses a question that the AI is supposed to answer within the context of that role.

The idea is to give an AI model additional context by assigning it a specific role to help it better understand the question, often resulting in more efficient and accurate answers.

In this example, shown in Figure 1, the language model is assigned the role of a physics professor named Samantha. The prompt provides background information about Samantha’s knowledge and role. It also defines Samantha’s goals, communication style, and behavior when interacting with the students.

Figure 1: Example of Role Prompting

With this role prompt, the language model will aim to respond to user queries as if it were Samantha, the physics professor. It will draw upon the provided knowledge and communication guidelines to offer relevant information.
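As a rough illustration, a role prompt like the one in Figure 1 is often passed to chat-style models as a "system" message followed by the user's question. The sketch below assumes that message format (a list of dictionaries with `role` and `content` keys, a convention many chat APIs share); `build_role_prompt` and the persona text are hypothetical and are not the exact prompt shown in Figure 1.

```python
from typing import Dict, List

# Minimal sketch: a role prompt expressed as a chat-style message list.
# build_role_prompt is a hypothetical helper; the persona text is illustrative.


def build_role_prompt(role_description: str, question: str) -> List[Dict[str, str]]:
    """Return messages whose first entry assigns the model its role."""
    return [
        {"role": "system", "content": role_description},
        {"role": "user", "content": question},
    ]


SAMANTHA = (
    "You are Samantha, a physics professor. "
    "Explain concepts clearly, use simple analogies for beginners, "
    "and encourage students to ask follow-up questions."
)

messages = build_role_prompt(SAMANTHA, "Why does a satellite stay in orbit?")
for message in messages:
    print(f"{message['role']}: {message['content']}")
```

Keeping the role in its own system message makes it easy to reuse the same persona across many user questions.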

You can also check out the blog post “How to use OpenAI’s GPT 3.5 to annotate News Data” for an example of how role prompting works.

Moreover, to help you learn more about the role prompting technique for writing prompts, here is a table of its benefits and drawbacks.

| Benefits | Drawbacks |
|---|---|
| Defining a clear role, persona, knowledge base, and objectives for the AI assistant can significantly enhance the quality and relevance of its outputs for the given task. | Restrictive role prompts could limit the AI's ability to engage broadly and draw upon its full knowledge and capabilities. |
| Role prompting helps ensure the AI responds in a consistent manner that aligns with the defined persona. | It does not provide foolproof behavioural control over LLMs. A sufficiently capable model may find clever workarounds that satisfy the literal prompt while still behaving in unintended ways. |
| Careful role prompting can embed guidelines for ethics, safety, truthfulness, and alignment with human values into the AI's core persona, promoting safer and more trustworthy AI assistants. | Prompts that contain factually incorrect information about the AI's characteristics could lead to inconsistencies or confusion in its responses as it attempts to fulfil that role. There is also a risk of misuse, such as writing prompts that portray the AI in deceptive or dangerous roles. |
| Role-based AI assistants can provide tailored support and expertise in specialized fields such as healthcare, legal services, and engineering by pre-loading domain-specific knowledge and goals. | Heavy reliance on prompting could lead to brittleness, where the AI fails badly outside of narrowly defined roles. Robust general intelligence is still needed. |

2. Few-Shot Prompting

Few-shot prompting is another strategy for writing prompts, in which the model is given a small set of examples (or shots) that demonstrate the desired task or output. These examples help the LLM grasp the expected behaviour or outcome and apply it to new, similar inputs, even with limited context. This approach is also used to humanize AI text by mirroring human-like patterns.

Therefore, it is a valuable technique in NLP, particularly when labelled data is scarce or when quick adaptation to new tasks is required.

In the example given below, the model is provided with three examples of movie reviews along with their sentiment. The model is then asked to classify the sentiment of the next, unlabeled review (Review 4) based on the patterns it has observed in the few-shot examples.

Figure 2. Few-Shot Prompting

I generally call few-shot prompting “N-shot prompting,” where N is the number of examples (or shots) provided to the model. If we provided a single example, it would be called “one-shot prompting.” This term describes more precisely how many demonstrations are given to the language model during the prompting process.
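To make the N-shot idea concrete, the sketch below assembles a three-shot sentiment prompt similar in spirit to Figure 2. The reviews and the `build_few_shot_prompt` helper are hypothetical; the resulting string would be sent to a model, which is expected to complete the final "Sentiment:" line.

```python
from typing import List, Tuple

# Minimal sketch of an N-shot sentiment prompt. The reviews are made-up
# placeholders, not the ones shown in Figure 2.
EXAMPLES: List[Tuple[str, str]] = [
    ("The plot was gripping from start to finish.", "Positive"),
    ("I walked out halfway through; it was dull.", "Negative"),
    ("Brilliant acting and a beautiful soundtrack.", "Positive"),
]


def build_few_shot_prompt(examples: List[Tuple[str, str]], new_review: str) -> str:
    """Format N labelled examples followed by one unlabelled review."""
    lines = ["Classify the sentiment of each movie review as Positive or Negative.", ""]
    for i, (review, label) in enumerate(examples, start=1):
        lines.append(f"Review {i}: {review}")
        lines.append(f"Sentiment: {label}")
        lines.append("")
    # The last review is left unlabelled so the model completes "Sentiment:".
    lines.append(f"Review {len(examples) + 1}: {new_review}")
    lines.append("Sentiment:")
    return "\n".join(lines)


prompt = build_few_shot_prompt(EXAMPLES, "The jokes fell flat and the ending made no sense.")
print(prompt)
```

Passing a single example (`EXAMPLES[:1]`) produces a one-shot prompt; N is simply the number of labelled examples included.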

Moreover, to help you learn more about the few-shot technique of writing prompts, here is a table of its benefits and drawbacks.

| Benefits | Drawbacks |
|---|---|
| It enables models to learn from minimal examples, often just a few, rather than needing extensive datasets. This enhances sample efficiency and enables quick adaptation to new tasks. | The quality and clarity of the prompt are crucial. If the examples are ambiguous, inconsistent, or fail to cover edge cases, the model's output will be poor. |
| With few-shot prompting, the same base model can be used for many different tasks just by providing a few examples in the prompt. This flexibility means models can be rapidly adapted to novel domains and applications. | There is limited ability to control the model's behaviour beyond the provided examples, which may lead to unpredictable generalization. |
| Compared with zero-shot prompting (giving the model no examples), including relevant examples in the prompt often improves performance on unseen tasks. This helps models better understand tasks and make accurate predictions for new data. | It relies heavily on the base model's existing capabilities. It doesn't fundamentally expand what the model can do; it just recombines those capabilities in new ways. |
| Humans can learn from a few examples and generalize to new tasks rapidly. Few-shot prompting helps narrow the gap between AI and human-like task flexibility and efficiency. | For complex tasks, a few demonstrations may not be sufficient to fully specify the behaviour. The model can fail to infer the full scope and nuance of the task. |

3. Chain of Thought Prompting

The Chain of Thought method is crucial for crafting thoughtful, well-reasoned replies. This technique for writing prompts guides the model through a systematic thinking process, exploring concepts, ideas, or problem-solving approaches one step at a time.

Furthermore, this approach of writing prompts entails dissecting a complex subject or problem into smaller, digestible parts that prompt the model’s logical thinking to unfold systematically, resulting in a cohesive and organized reply. It’s akin to guiding the model through a cognitive expedition, where ideas and concepts are linked in a coherent and significant sequence.

Figure 3: Example of CoT Prompting
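As a rough sketch, a chain-of-thought prompt can simply ask for numbered steps and a clearly marked final answer, which also makes the result easy to extract programmatically. The instruction wording, the "Final Answer:" convention, and the helper names are assumptions for illustration (the closing "Let's think step by step." is a widely used zero-shot CoT trigger phrase); the sample response is hand-written, not real model output.

```python
import re
from typing import Optional

# Minimal sketch of a chain-of-thought prompt plus answer extraction.


def build_cot_prompt(question: str) -> str:
    return (
        f"Question: {question}\n"
        "Work through the problem one step at a time, numbering each step. "
        "Then give the result on a last line starting with 'Final Answer:'.\n"
        "Let's think step by step."
    )


def extract_final_answer(response: str) -> Optional[str]:
    """Return the text after the last 'Final Answer:' marker, if present."""
    matches = re.findall(r"Final Answer:\s*(.+)", response)
    return matches[-1].strip() if matches else None


# Hand-written stand-in for a model's step-by-step reply.
sample_response = (
    "Step 1: 3 packs of 12 pencils is 3 × 12 = 36 pencils.\n"
    "Step 2: Giving away 10 leaves 36 - 10 = 26 pencils.\n"
    "Final Answer: 26"
)

print(build_cot_prompt("I buy 3 packs of 12 pencils and give away 10. How many are left?"))
print(extract_final_answer(sample_response))
```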

Moreover, to help you learn more about the chain of thought technique of writing prompts, here is a table of its benefits and drawbacks.

| Benefits | Drawbacks |
|---|---|
| The CoT technique for writing prompts encourages the language model to break down complex problems into smaller, more manageable steps, leading to more accurate and logical conclusions, much like the human thought process. | Generating step-by-step reasoning requires more computational resources and time than producing a single, direct response. |
| With the step-by-step approach provided by CoT prompting, LLMs can tackle a wider range of problems, including those that require multiple steps or complex reasoning. | If the model is trained on a limited set of reasoning patterns or problem-solving approaches, it may struggle to generate appropriate chains of thought for unconventional problems. |
| It helps users understand the language model's decision-making process by providing intermediate reasoning steps, increasing transparency and trust in the model's outputs. | The model may generate unnecessary steps or redundant information, leading to longer, less concise responses that are harder to interpret. |
| By breaking down the problem-solving process into smaller steps, CoT prompting can help reduce errors and improve the overall accuracy of the language model's outputs. | Some domains, such as creative writing, personal opinions, or subjective decision-making, may not benefit as much from this. In these cases, the step-by-step reasoning process may not be as relevant or effective in generating appropriate responses. |
| It can be utilized in various fields, including mathematics, science, and logic-based problem-solving. This adaptability enables language models to be applied in a wide array of scenarios, from answering inquiries to resolving intricate issues across different disciplines. | If the training data contains biased or misleading reasoning patterns, the model may learn to generate chains of thought that perpetuate these biases or lead to incorrect conclusions. |

4. Self-Consistency

Self-consistency is a technique for improving the reliability of a language model’s answers, especially on reasoning tasks. Instead of relying on a single response, the same prompt (usually a chain-of-thought prompt) is run several times with sampling enabled, so the model produces several different reasoning paths. The final answers from these paths are then compared, and the most consistent one, typically the answer that appears most often, is selected.

Figure 4: Example of Self-Consistency Prompt

The intuition is that a correct answer can usually be reached through several different lines of reasoning, while mistakes tend to scatter across different wrong answers. The answer that most sampled paths agree on is therefore more likely to be correct than any single response.
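The voting step of self-consistency can be sketched in a few lines. This is a minimal illustration that assumes final answers have already been extracted from each sampled response (for example, from a "Final Answer:" line); `sample_fn` is a hypothetical placeholder for a model call made with sampling enabled, and the simulated outputs are invented for the demo.

```python
from collections import Counter
from typing import Callable, Optional

# Minimal sketch of self-consistency: sample several answers, keep the most common.
# sample_fn is a hypothetical stand-in for one sampled (temperature > 0) model call
# that returns the extracted final answer, or None if none could be parsed.


def self_consistent_answer(
    sample_fn: Callable[[], Optional[str]], n_samples: int = 5
) -> Optional[str]:
    answers = [sample_fn() for _ in range(n_samples)]
    # Ignore samples where no final answer could be extracted.
    answers = [a for a in answers if a is not None]
    if not answers:
        return None
    # most_common(1) returns [(answer, count)] for the most frequent answer.
    return Counter(answers).most_common(1)[0][0]


# Simulated outputs from five reasoning paths: three agree on "26".
simulated = iter(["26", "24", "26", "36", "26"])
result = self_consistent_answer(lambda: next(simulated), n_samples=5)
print(result)
```

Ties go to whichever answer was seen first, because `Counter.most_common` orders equal counts by first occurrence; a real system might instead draw more samples when the vote is close.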

Moreover, to help you learn more about the self-consistency technique for writing prompts, here is a table of its benefits and drawbacks.

| Benefits | Drawbacks |
|---|---|
| Self-consistency improves accuracy by comparing several independently sampled answers and keeping the one most of them agree on, which reduces the impact of any single flawed reasoning path and helps reduce hallucinations. | Agreement is not the same as correctness: if the model makes the same mistake on most samples, majority voting will confidently return the wrong answer. |
| It increases coherence by favouring answers that are consistently supported across multiple reasoning paths, filtering out contradictory or nonsensical outliers. | The model may converge on a common or popular answer that reflects biases in its training data rather than the correct one. |
| Because divergent answers are outvoted, occasional reasoning errors are effectively corrected without retraining the model. | Its effectiveness depends on the base model producing a correct reasoning path reasonably often; if it rarely does, extra samples add little. Generating several samples also multiplies cost and latency. |
| It enhances the reliability and trustworthiness of the model's responses for applications where consistency is crucial, such as question-answering systems or knowledge retrieval. | It works best for tasks with a short, comparable final answer (such as a number or a multiple-choice option); for open-ended text, deciding which outputs "agree" is much harder. |
| It helps ground the model's responses in its trained knowledge, reducing the tendency to generate unfounded speculation or irrelevant information. This promotes outputs that are more closely tied to the model's learned facts and relationships. | |

5. Generated Knowledge Prompting

The generated knowledge technique of writing prompts lets a model draw on relevant background knowledge to improve its results. To do so, the user first prompts the model to generate helpful information about the topic, then feeds that generated knowledge into a second prompt that produces the final response.

Figure 5: A Depiction of Generated Knowledge Prompting

For example, if we want a language model to write an article on cybersecurity, we first prompt the model to generate some facts, types, or techniques related to cybersecurity. The model can then produce a more effective response by drawing on this generated knowledge in the final prompt, as illustrated below:

Figure 6: Example of generated knowledge prompting
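The two-stage process described above can be sketched as two calls to a model. In this minimal sketch, `complete` is a hypothetical callable standing in for whatever LLM client you use, and the prompt wording is an assumption rather than the prompt shown in Figures 5 and 6. A fake model is included so the example runs offline.

```python
from typing import Callable, List

# Minimal sketch of generated knowledge prompting as two model calls.
# `complete` is a hypothetical function: prompt text in, model text out.


def generated_knowledge_answer(complete: Callable[[str], str], topic: str, task: str) -> str:
    # Stage 1: ask the model to produce background knowledge on the topic.
    knowledge = complete(
        f"List a few key facts, types, or techniques related to {topic}. "
        "Write one per line."
    )
    # Stage 2: feed that knowledge back in alongside the actual task.
    final_prompt = (
        f"Knowledge:\n{knowledge}\n\n"
        f"Using the knowledge above where relevant, {task}"
    )
    return complete(final_prompt)


# Fake model so the sketch runs without an API: it records every prompt.
prompts_seen: List[str] = []


def fake_complete(prompt: str) -> str:
    prompts_seen.append(prompt)
    if len(prompts_seen) == 1:
        return "1. Phishing\n2. Multi-factor authentication"
    return "Draft article..."


answer = generated_knowledge_answer(
    fake_complete, "cybersecurity", "write a short article on cybersecurity."
)
print(answer)
```

The second prompt sent to the fake model contains the first call's output, which is the essence of the technique.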

Moreover, to help you learn more about the generated knowledge technique of writing prompts, here is a table of its benefits and drawbacks.

| Benefits | Drawbacks |
|---|---|
| It can generate relevant knowledge for various tasks and datasets using just a few human-written demonstrations, without needing task-specific templates or generators. | If the language model lacks access to reliable data on a subject, the information it generates regarding that topic may be unreliable. |
| It leverages implicit knowledge stored in large, pre-trained language models, rather than depending on a structured, high-quality knowledge base relevant to the task's domain. | It can be computationally costly, particularly for large and complex tasks. |
| It substantially improves the performance of both zero-shot and fine-tuned language models on multiple commonsense reasoning benchmarks. It achieves state-of-the-art results on 3 out of 4 tasks evaluated: numerical commonsense (NumerSense), general commonsense (CommonsenseQA 2.0), and scientific commonsense (QASC). | If the language model cannot produce sufficient information for a task, the Generated Knowledge Prompting (GKP) approach won't effectively enhance the language model's performance on that task. |
| The generated knowledge makes the reasoning process of language models more interpretable. Human evaluation shows that GKP frequently transforms implicit reasoning into explicit step-by-step procedures supported by the language model, such as paraphrasing, induction, deduction, and analogy. | The technique is relatively new, and there is still much to learn about how and when to use it. |
| Using GKP, smaller language models achieve comparable or even better performance than much larger models without GKP. For example, T5-large with GKP outperforms the GPT-3 baseline on NumerSense. | |

While the techniques for writing prompts discussed above (role prompting, few-shot prompting, chain of thought prompting, self-consistency, and generated knowledge prompting) are some of the most important and widely used approaches, various other prompting techniques can also be effective in eliciting desired behaviors and responses from large language models.

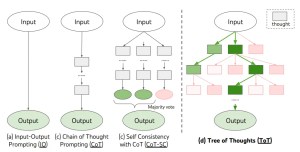

Prompting techniques such as instruction prompting, attribute prompting, and active prompting are not covered here, although tree-of-thought prompting and a few-shot/chain-of-thought hybrid are introduced below. Each of these techniques has its strengths, limitations, and use cases, and exploring and experimenting with different approaches for writing prompts can help unlock the full potential of language models for a wide range of applications.

6. Tree-of-Thought Prompting

Tree-of-Thought (ToT) prompting is an advanced prompt engineering framework designed to improve a large language model’s ability to perform complex reasoning, planning, or search tasks.

Instead of following a single linear chain of reasoning (as in Chain-of-Thought prompting), ToT allows the model to generate multiple intermediate “thoughts” or partial solutions, forming a tree structure of possibilities.

At each step, multiple candidate thoughts are explored; unpromising branches are pruned, and promising ones are expanded further. Sometimes, lookahead or backtracking is used to compare branches and choose the best path toward the final solution.

The “thoughts” are coherent units of reasoning (they might be equations, ideas, paragraphs, etc.), depending on the task. ToT generalises CoT (Chain-of-Thought) by introducing branching, evaluation, pruning, and search strategies over reasoning paths.
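The search loop described above can be sketched in a few lines. This is a minimal illustration, not the reference ToT implementation: it uses breadth-first expansion with a fixed beam width, and the `propose` and `score` functions are hypothetical toy stand-ins for the LLM prompts that would generate and evaluate thoughts in practice. The toy task (reach 10 from 1 using the moves "+3" and "*2") is made up purely to keep the example runnable.

```python
from typing import List, Optional

# Minimal sketch of a Tree-of-Thought style search: breadth-first expansion
# with pruning to a fixed beam width. In real ToT, propose() and score() would
# be LLM prompts; here they are toy functions so the loop runs offline.

Thought = List[str]  # a partial solution: the sequence of moves chosen so far


def apply_moves(moves: Thought, start: int = 1) -> int:
    value = start
    for move in moves:
        value = value + 3 if move == "+3" else value * 2
    return value


def propose(thought: Thought) -> List[Thought]:
    """Branching step: extend a partial solution with each possible next move."""
    return [thought + [move] for move in ("+3", "*2")]


def score(thought: Thought, target: int) -> int:
    """Evaluation step: higher is better, and 0 means the target is reached."""
    return -abs(target - apply_moves(thought))


def tree_of_thought(target: int = 10, beam_width: int = 2, max_depth: int = 5) -> Optional[Thought]:
    frontier: List[Thought] = [[]]
    for _ in range(max_depth):
        # Expand every surviving branch into its candidate next thoughts.
        candidates = [child for thought in frontier for child in propose(thought)]
        candidates.sort(key=lambda t: score(t, target), reverse=True)
        if score(candidates[0], target) == 0:
            return candidates[0]
        # Prune: keep only the most promising branches for the next level.
        frontier = candidates[:beam_width]
    return None


best = tree_of_thought(beam_width=2)
print(best, apply_moves(best))        # ['+3', '+3', '+3'] 10
print(tree_of_thought(beam_width=1))  # None
```

With `beam_width=1` the search behaves like a single linear chain: it greedily commits to the locally best move (1 → 4 → 8 → 11 ...) and never reaches 10. Keeping two branches lets it recover through 1 → 4 → 7 → 10, which illustrates the reduced risk of errors from early bad choices.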

| Benefits | Drawbacks |
|---|---|
| Improved problem-solving on complex tasks: ToT enables exploring multiple paths, often leading to better and more accurate solutions for tasks like puzzles, planning, and mathematical reasoning. | High computational cost: Generating many candidate thoughts, keeping track of branches, and evaluating/pruning them adds overhead in CPU/GPU time and memory. |
| Reduced risk of errors from early bad choices: Since ToT can backtrack or prune bad paths, it avoids committing too early to flawed chains. | Implementation complexity: Requires designing prompts/templates for generating thoughts, scoring/evaluation criteria, and possibly integrating search algorithms. This takes more engineering than simpler prompting. |
| Flexibility and adaptability: The branching factor, depth, and evaluation criteria can be tailored to resource constraints or the nature of the task. | Latency/speed issues: More steps and evaluations mean slower response times, which may be problematic for interactive applications. |
| Better exploration/creativity: Especially in open-ended or creative tasks, multiple branches mean exploring more diverse solutions. | Diminishing returns: Beyond a certain depth or branching factor, exploring extra paths adds less value, especially when many branches are similar or low quality. |
| More transparency/interpretability: Intermediate thoughts/branches can be inspected to understand how the model arrived at the final solution. | Resource constraints: Not all environments can run large LMs with enough compute to maintain the branching and backtracking required. |

7. Few-Shot with Chain-of-Thought Prompting

The few-shot with chain-of-thought hybrid is a prompting method that combines the strengths of few-shot prompting (guiding the model with examples) and chain-of-thought prompting (asking the model to reason step by step).

Instead of just showing input-output pairs, this method provides examples that explicitly include reasoning steps. By doing so, the model learns not only the format of the output but also the reasoning pattern required to solve similar tasks.

This hybrid is particularly effective for complex reasoning problems where both demonstration and step-by-step reasoning improve performance.

EXAMPLE: Q: If a train travels 60 km in 1 hour, how far in 4 hours?

A: Step 1: Speed = 60 km/h. Step 2: Distance = 60 × 4 = 240 km. Final Answer: 240 km.

Q: A bag has 3 apples and 2 oranges. If you add 2 more apples, how many apples will you have?

A: Step 1: Initial apples = 3. Step 2: Add 2. Total = 5 apples. Final Answer: 5.

Q: A farmer has 10 cows. He buys 5 more. How many cows total?

A:

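The model is expected to continue in the same reasoning pattern, for example: “Step 1: Initial cows = 10. Step 2: Add 5. Total = 15 cows. Final Answer: 15.”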
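The example prompt above can also be assembled programmatically, which makes it easier to swap in new worked examples. This is a minimal sketch: the `(question, steps, answer)` structure and the helper names are assumptions, while the "Step N:" and "Final Answer:" layout mirrors the example in the text.

```python
from typing import List, Tuple

# Minimal sketch: building a few-shot chain-of-thought prompt from
# (question, reasoning steps, answer) triples.
Example = Tuple[str, List[str], str]

EXAMPLES: List[Example] = [
    ("If a train travels 60 km in 1 hour, how far in 4 hours?",
     ["Speed = 60 km/h.", "Distance = 60 × 4 = 240 km."], "240 km"),
    ("A bag has 3 apples and 2 oranges. If you add 2 more apples, how many apples will you have?",
     ["Initial apples = 3.", "Add 2. Total = 5 apples."], "5"),
]


def format_example(question: str, steps: List[str], answer: str) -> str:
    """Render one worked example with numbered reasoning steps."""
    numbered = " ".join(f"Step {i}: {s}" for i, s in enumerate(steps, start=1))
    return f"Q: {question}\nA: {numbered} Final Answer: {answer}."


def build_few_shot_cot_prompt(examples: List[Example], new_question: str) -> str:
    blocks = [format_example(*ex) for ex in examples]
    # End with a bare "A:" so the model continues the demonstrated pattern.
    blocks.append(f"Q: {new_question}\nA:")
    return "\n\n".join(blocks)


prompt = build_few_shot_cot_prompt(
    EXAMPLES, "A farmer has 10 cows. He buys 5 more. How many cows total?"
)
print(prompt)
```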

| Benefits | Drawbacks |
|---|---|
| Combines the guidance of few-shot prompting with the clarity of CoT. | Needs carefully designed examples, which are harder to prepare. |
| Improves consistency in reasoning and accuracy. | Longer prompts → higher token usage and cost. |
| Works well for reasoning-heavy tasks (math, logic). | May still fail if examples are too narrow or biased. |
| Easy to adapt: just annotate a few examples with reasoning. | Not ideal for very open-ended tasks like creative writing. |

Benefits of writing a good prompt

There are several reasons why writing a good AI prompt is beneficial. Mentioned below are some of the key benefits of writing prompts that are effective.

Clarity:

An effective prompt ensures that the AI knows exactly what is expected of it and leaves out unnecessary information, so the content delivered to the user is clear.

Encourages creativity and innovation:

A well-written AI prompt can act as a much-needed push for sparking creativity and new ideas. It can also help you move past the writer’s block that humans face from time to time.

Effective time utilization:

Writing prompts that are effective ensures the delivery of relevant and accurate information, which in turn saves time by minimizing misunderstandings and encouraging in-depth engagement. Well-structured prompts also help language models humanize AI-generated text, producing responses that sound clearer and more natural for users.

Increased work efficiency:

High-quality prompts stimulate critical thinking, improve problem-solving, and facilitate better communication, ultimately leading to more productive and meaningful interactions.

Conclusion

Techniques for writing prompts are a powerful tool for guiding and optimizing the performance of large language models. By carefully crafting prompts that provide context, examples, reasoning steps, knowledge integration, and more, we can elicit more accurate, relevant, and insightful responses from these AI systems.

The five key techniques of writing prompts covered here (role prompting, few-shot prompting, chain of thought prompting, self-consistency prompting, and generated knowledge prompting), together with the tree-of-thought and few-shot chain-of-thought variants, offer a strong foundation for effective LLM interaction. However, the field of prompt engineering is rapidly evolving, with new techniques and best practices emerging regularly.

To get the most out of language models, it’s important to stay informed about the latest advances, experiment with different approaches, and tailor prompts to the specific needs of each use case. By mastering the art and science of writing prompts, we can harness the immense potential of language models to solve complex problems, generate valuable insights, and push the boundaries of what’s possible with AI.

Frequently Asked Questions

Q1. How to write AI prompts?

Creating AI prompts involves providing clear instructions to guide the AI’s responses. Start by defining the task, then offer relevant context and any necessary constraints or guidelines. If helpful, include examples of the desired output. For multi-step or complex tasks, break them down into manageable parts. Anticipate potential ambiguities or misinterpretations in your prompt, and refine it iteratively based on the responses.

Q2. What is prompt writing?

Prompt writing is the process of crafting input text or instructions that are fed into a language model to guide its output and behaviour. It involves carefully selecting words, phrases, and structures to provide clear, concise, and context-rich instructions to the model.

Q3. What are the different techniques for writing prompts?

There are several techniques to choose from for writing prompts; the five covered in this article are listed below.

- Role Prompting

- Few-Shot Prompting

- Chain of Thought Prompting

- Self-Consistency

- Generated Knowledge Prompting

Q4. Why are writing prompts important in AI?

Prompts are crucial in AI because they provide context and guidance for generating coherent and relevant text. They help narrow down the vast possibilities of language generation, allowing LLMs to focus on specific topics, styles, or formats. Furthermore, prompts enable the models to produce more targeted and meaningful output.

Q5. What are the challenges associated with using AI prompts?

Challenges with AI prompts include generating coherent, accurate, and relevant responses, handling complex or ambiguous queries, and avoiding biased or harmful outputs. Prompt-based systems also struggle with tasks requiring deep understanding, reasoning, or domain-specific knowledge. Ensuring transparency and accountability in prompt-based AI systems is another significant challenge.

Q6. What are the benefits of writing good AI prompts?

Writing good AI prompts enhances clarity, fosters creativity, and produces effective responses. It ensures relevant and accurate information, saves time by reducing misinterpretations, and promotes in-depth engagement. Furthermore, good prompts encourage critical thinking, enhance problem-solving, and facilitate better communication, ultimately leading to more productive and meaningful interactions.

Aditi Chaudhary is an enthusiastic content writer at Newsdata.io, where she covers topics related to real-time news, News APIs, data-driven journalism, and emerging trends in media and technology. Aditi is passionate about storytelling, research, and creating content that informs and inspires. As a student of Journalism and Mass Communication with a strong interest in the evolving landscape of digital media, she aims to merge her creativity with credibility to expand her knowledge and bring innovation into every piece she creates.